What else can you do with BXA? Check out this session to see some other interesting applications.

Key objectives:

- Quantify the gain in information acquired on a parameter after fitting

- Include custom priors in BXA fits

- Speed up BXA runtimes for fits with lots of parameters using step samplers

Exercise 5.1 - information gain

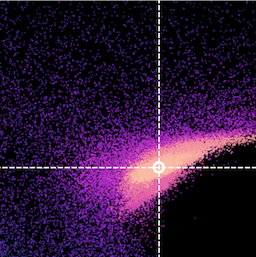

Did we gain any information in a given parameter by fitting? Or was the parameter posterior effectively just its prior?Here we will estimate the Kullback–Leibler (K-L) divergence for the PhoIndex parameter in model3 for two different priors.

- Use the K-L equation in Buchner et al., (2022) to estimate the K-L divergence for your fit to

model3from Exercise 1.3:N = np.sum(posterior) M = np.sum(prior) dkl_tot = 0. for i, b in enumerate(posterior): if b == 0.: continue dkl = (b / N) * np.log2((b / N) / (prior[i] / M)) if dkl < 0.: dkl = 0. dkl_tot += dkl - How many bits of information were gained from using a uniform prior for

PhoIndexinmodel3? - Next try fitting

model3with the following Gaussian prior onPhoIndex:bxa.create_gaussian_prior_for(model3, model3.zpowerlw.PhoIndex, 1.8, 0.15)

- Were more bits of information gained from using a uniform prior for

PhoIndexor a Gaussian prior? Why?

- You can create custom priors in BXA with

create_custom_prior_for()(see example_advanced_prior.py for an example). - Cross-calibration constants cannot be negative and can (in theory) vary over orders of magnitude. However, if the instruments are operating as expected one would expect the cross-calibration to be approximately consistent with unity. Create a custom log-Gaussian prior for the cross-calibration between NuSTAR and Chandra introduced in Exercise 1.5:

def custom_log_gaussian_prior(u): """ Gaussian prior in the log (base 10) of the parameter """ logx = scipy.stats.norm(0., 0.03).ppf(u) x = 10 ** (logx) return x prior = bxa.create_custom_prior_for(AllModels(2), AllModels(2)(1), custom_log_gaussian_prior) - Use this prior to generate new BXA fits for

model1,model2andmodel3. Did the runtime change? Has the posterior changed for the cross-calibration? Why or why not?

Exercise 5.3 - speeding up BXA

For complex fits (e.g., multiple datasets simultaneously, many parameters, high signal-to-noise spectra, ...), BXA supports a number of settings and features for reducing the overall runtime.- Use the built in parallelisation with MPI (Message Passing Interface) to run a new fit with BXA using one of the models:

mpiexec -np 4 python3 myscript.py - Run a new fit using the alternative arguments in

solver.run():frac_remain=float: sets the cut-off condition for the integration. A low value for float is optimal for finding peaks but higher values (e.g., 0.5) can be used if the posterior distribution is a relatively simple shape.max_num_improvement_loops=0: sets the number of timesrun()will try to iteratively assess how many more samples are needed.

- Nested sampling requires samples to be drawn from the prior that exceed some likelihood threshold. One method to achieve this is with step samplers, that can be faster for fits with many free parameters (see Buchner+22 for more details). In BXA, step samplers can be implemented with the

speedargument insolver.run():speed="safe"

Reactive Nested Sampler, recommended.speed="auto"

Step sampler with adaptive steps.speed=int

Step sampler with an integer number of steps. This option is faster, but the number of steps should be tested to ensure the Bayesian evidence estimation is accurate.- To see the other arguments of

run(), see here.

- Fit

model1to the NuSTAR data with step samplers of 10, 20 and 30 steps. Is the Bayesian evidence estimation for each stable and consistent with the original value found in Exercise 1.2?